Hermes Agent in 5 Minutes: The One-Command Setup Guide

Yesterday I published a collection of real user stories from people who tried Hermes Agent alongside OpenClaw. The most consistent thing they said: setup was fast.

So I figured: let’s test that claim. Here’s the actual walkthrough — from zero to a working Hermes session.

Prerequisites

Before you start, check two things.

| Requirement | How to check |

|---|---|

| OS | macOS (Intel or Apple Silicon), Linux (Ubuntu 22.04+), or Windows via WSL2 |

| Python 3.11+ | Run python3 --version in your terminal |

If Python comes back below 3.11, upgrade it first. On macOS, brew install python@3.12 works. On Ubuntu, sudo apt install python3.12. On WSL2, same as Ubuntu.

Everything else — dependencies, virtual environments, PATH configuration — the installer handles for you.

Step 1: Install (One Command)

Open your terminal. Copy this entire line and hit Enter:

curl -fsSL https://raw.githubusercontent.com/NousResearch/hermes-agent/main/scripts/install.sh | bash

That single command does five things behind the scenes:

- Checks and installs missing Python dependencies

- Clones the Hermes source code to

~/.hermes - Creates an isolated virtual environment

- Installs all required packages

- Adds the

hermescommand to your PATH

When it finishes, reload your shell:

source ~/.zshrc # or source ~/.bashrc

Then confirm it worked:

hermes

If you see a prompt or a message about model configuration, you’re in. Move to Step 2.

Step 2: Configure Your Model

Run the setup wizard:

hermes setup

It walks you through a series of choices in your terminal — no config files to edit manually. The key decision: which LLM provider to connect.

Option A: Cloud (Fastest Start)

Pick OpenRouter, Anthropic, or OpenAI. Paste your API key when prompted. Choose a model. Done.

If you want something cheap to test with, OpenRouter gives you access to lightweight models at fractions of a cent per query.

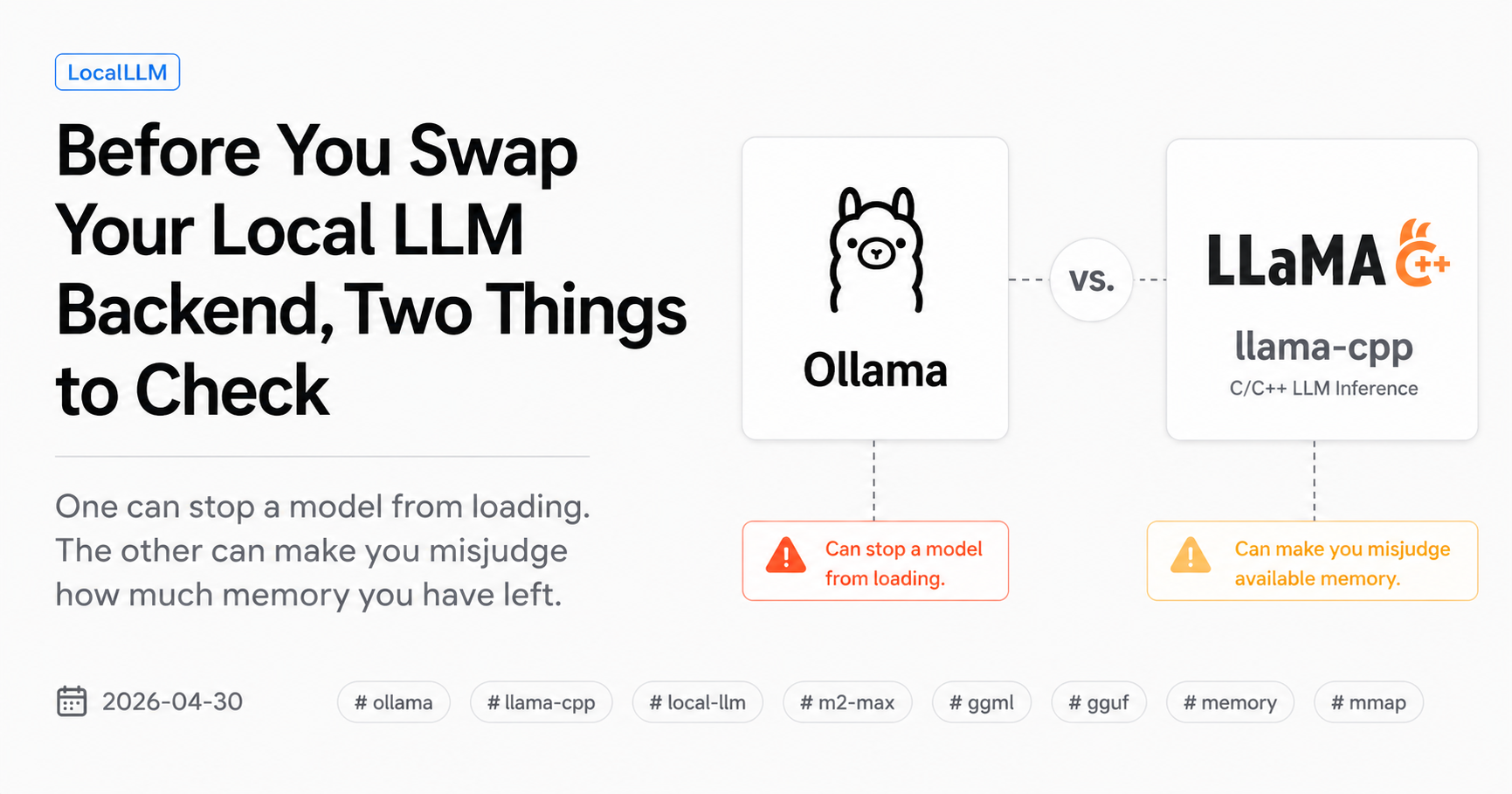

Option B: Local LLM via Ollama

If you’re already running Ollama (and if you’re reading this blog, there’s a good chance you are):

hermes model

Set the endpoint to:

http://127.0.0.1:11434/v1

Leave the API key empty. Pick whichever model you have pulled — Qwen, Gemma, Llama, anything Ollama serves.

This is the setup I’ll be testing against my own OpenClaw + Ollama stack.

Step 3: Choose Your Tools

hermes tools

This shows a menu of capabilities you can toggle on or off:

- File operations — read, write, search files on your machine

- Browser — web search and page reading

- Code execution — run scripts in a sandboxed environment

- Email, calendar — if you plan to use it as a daily assistant

For a first test, file operations and code execution are enough. You can always come back and enable more later.

Step 4: Start Chatting

hermes

That’s it. You’re in a live session. Try something simple:

- “Summarize the files in this directory”

- “What’s in my README?”

- “Draft a short email about project status”

Hermes will pick the right tools, ask for confirmation before anything destructive, and show you exactly what it’s doing at each step.

When Things Go Wrong

Three issues that trip up most first-time users:

hermes: command not found

Your shell didn’t pick up the new PATH yet. Run:

source ~/.zshrc # or ~/.bashrc

If that doesn’t fix it, close your terminal completely and open a new one.

Model connection errors

If Hermes says it can’t reach your model, double-check:

- Cloud: Is your API key valid? Does it have credits?

- Ollama: Is Ollama actually running? (

ollama listshould return models) - Endpoint: For Ollama, use

http://127.0.0.1:11434/v1— the/v1matters

Re-run hermes setup or hermes model to fix configuration. Nothing gets wiped — it just overwrites the setting you change.

Python version too old

If the installer complains about Python, check with:

python3 --version

You need 3.11 or higher. Upgrade through your package manager, or use pyenv if you need multiple versions side by side.

What Comes Next

Once you’ve confirmed Hermes runs and responds, the real value starts building over time. Here’s what you can explore after the basics:

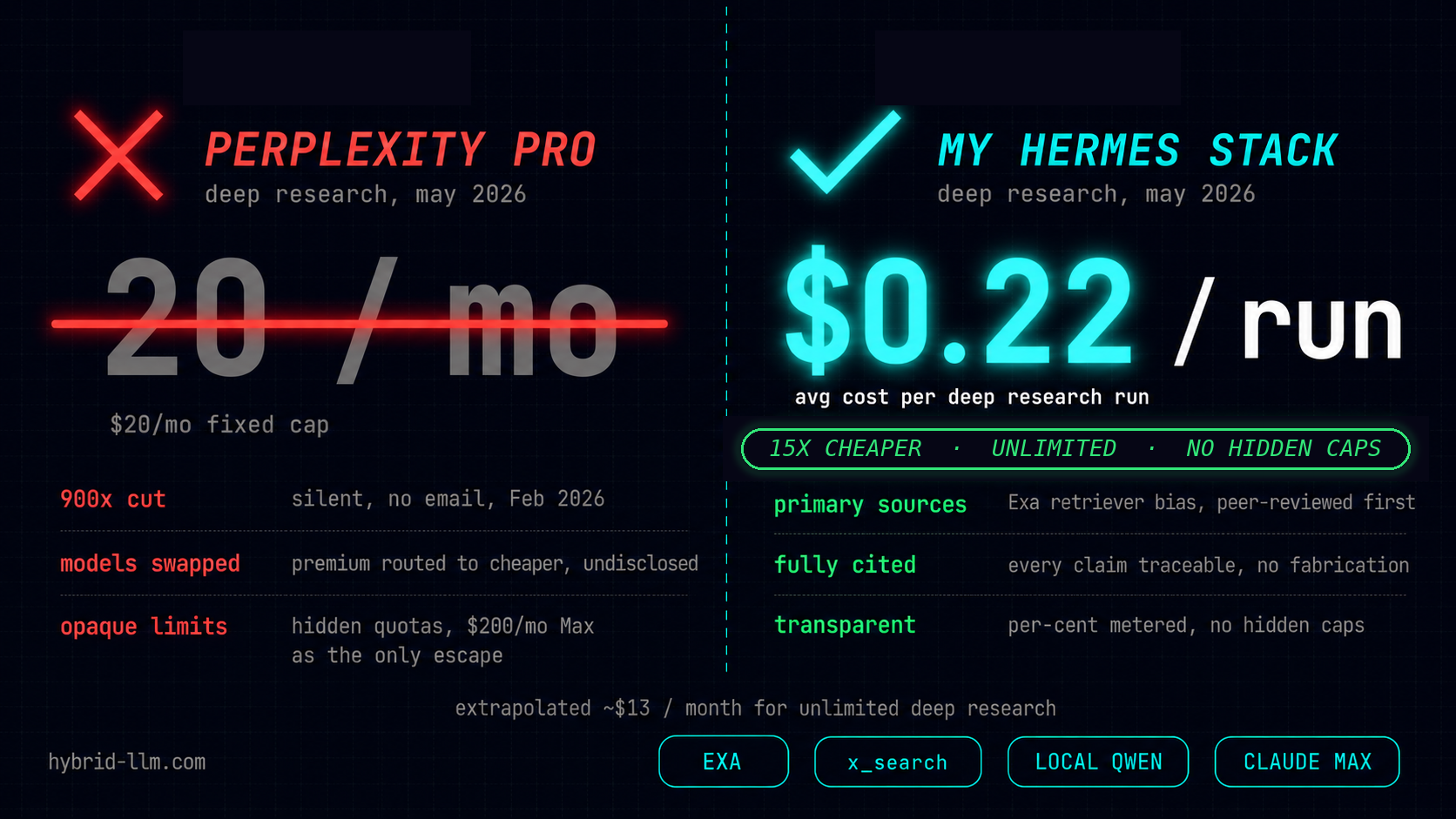

Fine-tune your model routing — Edit ~/.hermes/config.yaml to set a main model for complex tasks and a cheaper auxiliary model for memory search, summarization, and web extraction. This is where the hybrid local + cloud setup gets interesting.

Connect a chat channel — Add your Telegram bot token or Discord credentials to .env and Hermes becomes reachable from your phone. Same memory, same skills, different interface.

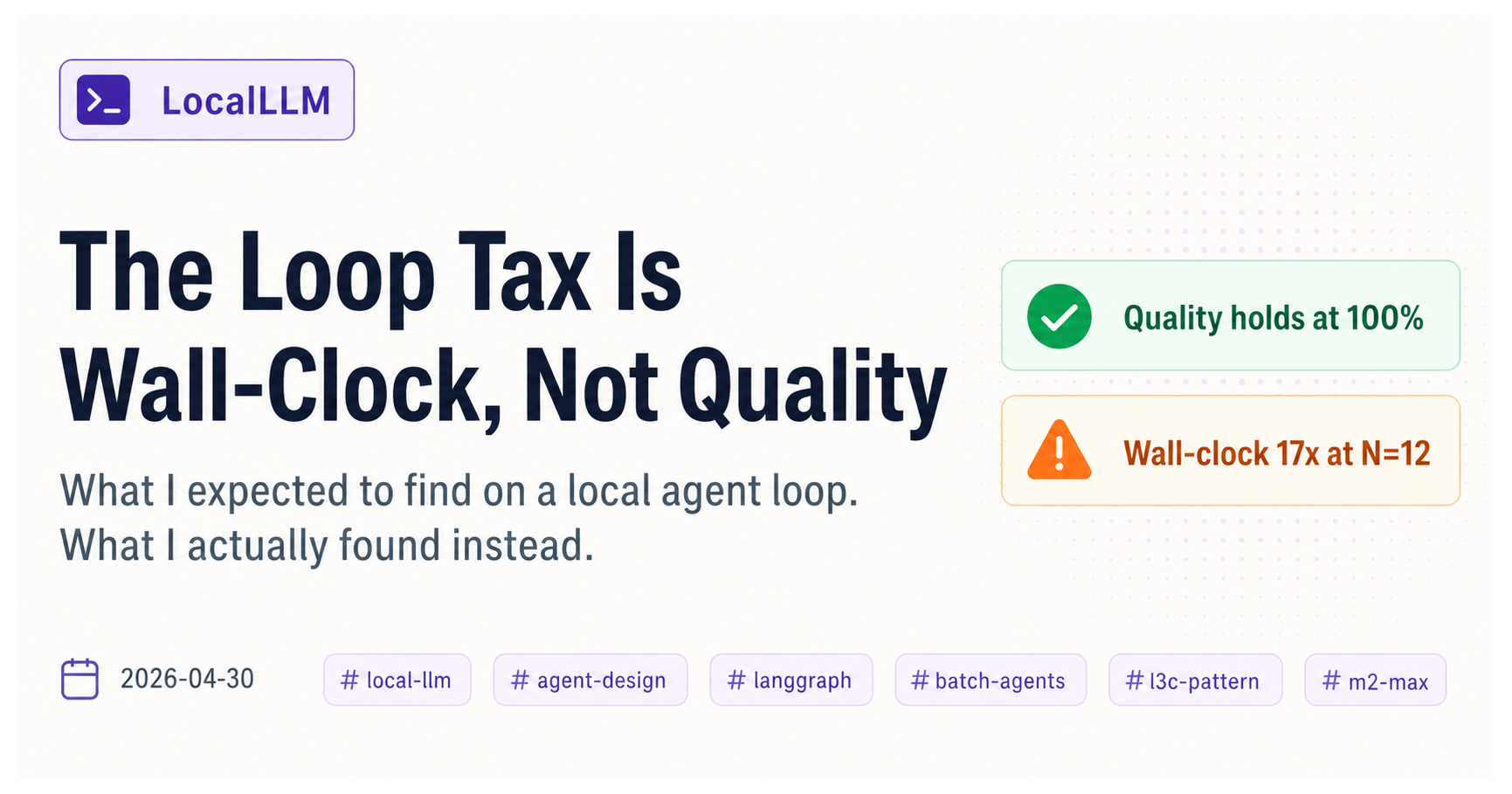

Let the skill loop run — This is the part that makes Hermes different. After you’ve used it for a few days, check hermes skills to see what it’s learned from your usage patterns. The longer you run it, the fewer steps it needs for repeat tasks.

Honest Timing

From opening my terminal to getting a working response: under 10 minutes. Most of that was deciding which Ollama model to point it at.

The “5 minutes” claim from users I interviewed yesterday? Realistic if you already know which provider you want. Add a few minutes if you’re comparing options.

Either way — it’s meaningfully faster than the OpenClaw setup I went through weeks ago. Whether the long-term experience matches up is the next thing I want to test.