Hermes Agent Looks Interesting — So I Collected Real User Stories

Every few days, someone in a local LLM thread drops the same question: “Has anyone tried Hermes Agent?”

I keep seeing it. On Reddit, on Zenn, on X. And every time, I notice something specific — the people asking are almost always current OpenClaw users.

I run OpenClaw myself. It handles my daily agent workflows: writing, scheduling, research. It works. But that recurring question got under my skin: what are people actually finding when they try the other side?

So instead of switching blindly, I did something simpler. I went and collected real stories from people who made the jump — or at least tried it.

What Is Hermes Agent, in Three Lines

Hermes Agent is an open-source AI agent framework built by Nous Research. It runs on your own machine or server, connects to your chat apps, and handles tasks like any other agent.

What makes it different: it has a built-in learning loop. Every task it completes gets distilled into a reusable “skill.” Those skills improve each time they’re used. The longer you run it, the more it knows how to do — without you writing anything.

Think of it as an agent that doesn’t just execute. It remembers, and it gets better.

OpenClaw vs Hermes: The Personality Split

Both are open-source, self-hosted AI agent frameworks. Both connect to multiple LLM providers. Both support CLI, Telegram, Discord, and more. On paper, they look like direct competitors.

But the design philosophy is different in a way that matters.

| | OpenClaw | Hermes Agent |
|---|---|---|
| Core metaphor | A toolbox: wide, modular, skill-rich | A growing teammate: deep, persistent, self-improving |
| Skill system | You write skills manually (or pull from 5,700+ community skills) | Agent creates skills automatically from experience |
| Memory | Session logs + simple persistence | 3-layer memory: working, conversational, skill-based |
| Setup feel | Powerful but config-heavy; plan for a few days | Lighter onboarding; most users report same-day productivity |
| Language | Node.js / TypeScript ecosystem | Python ecosystem |
| Best for | Orchestrating multiple agents and workflows | Running one agent that deepens over time |

Neither is “better.” They optimize for different things. OpenClaw gives you breadth. Hermes gives you depth.

What Real Users Are Saying

This is the part I actually went digging for. Not marketing copy, not comparison tables — just people describing their own experience.

“Setup took a day, not a week”

Multiple users coming from OpenClaw mentioned the same contrast. OpenClaw’s initial configuration — gateway setup, workspace files, model routing — can take several days to get right, especially for non-engineers.

Hermes users consistently reported reaching a working state faster. One developer put it bluntly: the setup that took a week of wrestling with OpenClaw’s Node.js config maze took a single day with Hermes. The `hermes claw migrate` command, which imports your existing OpenClaw config and memory, helps bridge the gap.

“It remembers things, and actually connects them”

This was the most commonly praised difference. OpenClaw does store memory across sessions — I use MEMORY.md files for exactly this. But several users described Hermes’s approach as qualitatively different.

One CTO who ran both systems for three weeks described it this way: OpenClaw remembers, but the memories sit in isolation. Hermes connects them. Its three-layer memory system — working memory, persistent conversational memory, and a skill/knowledge layer with full-text search — lets it pull context from weeks ago and weave it into current decisions.
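To make the three-layer idea concrete for myself, I sketched a toy version in Python. This is not Hermes’s actual implementation: the class, its method names, and the layer sizes are all invented for illustration, and the "full-text search" here is just a naive keyword match over stored text.

```python
from collections import deque


class LayeredMemory:
    """Toy model of three memory layers. All names here are hypothetical."""

    def __init__(self, working_size=3):
        # Layer 1: short working memory that only keeps the latest items.
        self.working = deque(maxlen=working_size)
        # Layer 2: persistent conversational log (kept in a list for this sketch).
        self.conversations = []
        # Layer 3: skill/knowledge notes, keyed by skill name.
        self.skills = {}

    def remember(self, text):
        """Store a message in working memory and in the persistent log."""
        self.working.append(text)
        self.conversations.append(text)

    def learn_skill(self, name, notes):
        """Record a reusable note under a skill name."""
        self.skills[name] = notes

    def search(self, query):
        """Return entries from layers 2 and 3 that contain every query word."""
        terms = query.lower().split()

        def matches(text):
            return all(term in text.lower() for term in terms)

        hits = [("conversation", t) for t in self.conversations if matches(t)]
        hits += [
            ("skill", f"{name}: {notes}")
            for name, notes in self.skills.items()
            if matches(f"{name} {notes}")
        ]
        return hits
```

The point of the sketch is the split itself: working memory forgets quickly, while the other two layers stay searchable, so an old detail can still be pulled back into a current task.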

Whether that matters depends on your use case. For long-running projects with lots of accumulated context, it sounds like a meaningful upgrade.

“Fewer tool calls, same result”

A user on Reddit described running a complex multi-step task that required over 50 tool calls in OpenClaw. In Hermes, the same task completed in 5 correctly targeted calls, finishing about two and a half minutes faster.

The implication: Hermes’s skill-learning loop may reduce trial-and-error over time, because the agent has already encoded the efficient path from prior runs.

I should note: this is one data point, and tool call efficiency depends heavily on the model and the task. But it aligns with the broader pattern people describe — less fumbling on repeat tasks.
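Here is a rough Python sketch of why a skill-learning loop could cut tool calls on repeat tasks. It assumes a very simplified model that I made up for illustration: `SkillStore`, `run`, and the `explore` callable are hypothetical names, not part of Hermes or OpenClaw.

```python
class SkillStore:
    """Toy skill store: remembers the tool calls that worked for a task type."""

    def __init__(self):
        self._skills = {}  # task type -> list of tool calls that succeeded

    def run(self, task_type, explore):
        """Return the list of tool calls made for this run.

        `explore` is a hypothetical callable that does the trial-and-error
        and returns a list of (tool_call, succeeded) pairs.
        """
        if task_type in self._skills:
            # Known pattern: replay the stored path and skip the failed attempts.
            return list(self._skills[task_type])
        attempts = explore()
        # Keep only the calls that succeeded as the reusable "skill".
        self._skills[task_type] = [call for call, ok in attempts if ok]
        return [call for call, _ in attempts]
```

On the first run every attempt counts, including the dead ends. On the second run of the same task type, only the calls that worked are replayed. Real agents are far messier than this, but it shows the shape of the claim.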

“I feel safer running it”

OpenClaw has a reputation problem in some circles. Stories about accidental `rm -rf` commands and concerns about API key exposure have circulated on X and Reddit. Fair or not, it makes some users nervous about leaving an agent running unattended.

Hermes users highlighted two things: destructive command filtering is on by default, and the framework is designed with zero telemetry — nothing phones home. The execution confirmation prompts before risky operations gave people confidence to keep it running on their local machine overnight.
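For a sense of what "destructive command filtering plus a confirmation prompt" can look like, here is a minimal Python sketch. It is my own illustration, not Hermes’s filter: the pattern list, `is_risky`, and `run_guarded` are assumptions, and a real filter would need to cover far more cases.

```python
import re

# Illustrative patterns only; this list is an assumption, not Hermes's rules.
RISKY_PATTERNS = [
    re.compile(r"\brm\s+(-[a-zA-Z]*[rf][a-zA-Z]*\s+)+"),  # rm -rf, rm -r -f
    re.compile(r"\bmkfs(\.\w+)?\b"),                      # formatting a filesystem
    re.compile(r"\bdd\s+.*\bof=/dev/"),                   # writing raw to a device
]


def is_risky(command):
    """Return True if the command matches any known destructive pattern."""
    return any(pattern.search(command) for pattern in RISKY_PATTERNS)


def run_guarded(command, confirm, execute):
    """Run a command, asking for confirmation first if it looks destructive.

    `confirm` and `execute` are caller-supplied callables (hypothetical hooks):
    `confirm(command)` returns True to allow, `execute(command)` runs it.
    """
    if is_risky(command) and not confirm(command):
        return "blocked"
    return execute(command)
```

Passing `confirm` and `execute` in as callables keeps the sketch testable without touching a real shell. Pattern matching like this is a safety net, not a guarantee, since a destructive command can always be phrased in a way the patterns miss.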

“Discord to local files felt like magic”

This one came from a first-time Hermes user who connected it to Discord. They sent a task request from their phone, and the output appeared as a file on their local machine. Both frameworks can do this, but the user’s point was that Hermes felt designed for this workflow from the start — not bolted on.

For anyone running agents primarily through chat platforms rather than terminal, this seems to be a genuine strength.

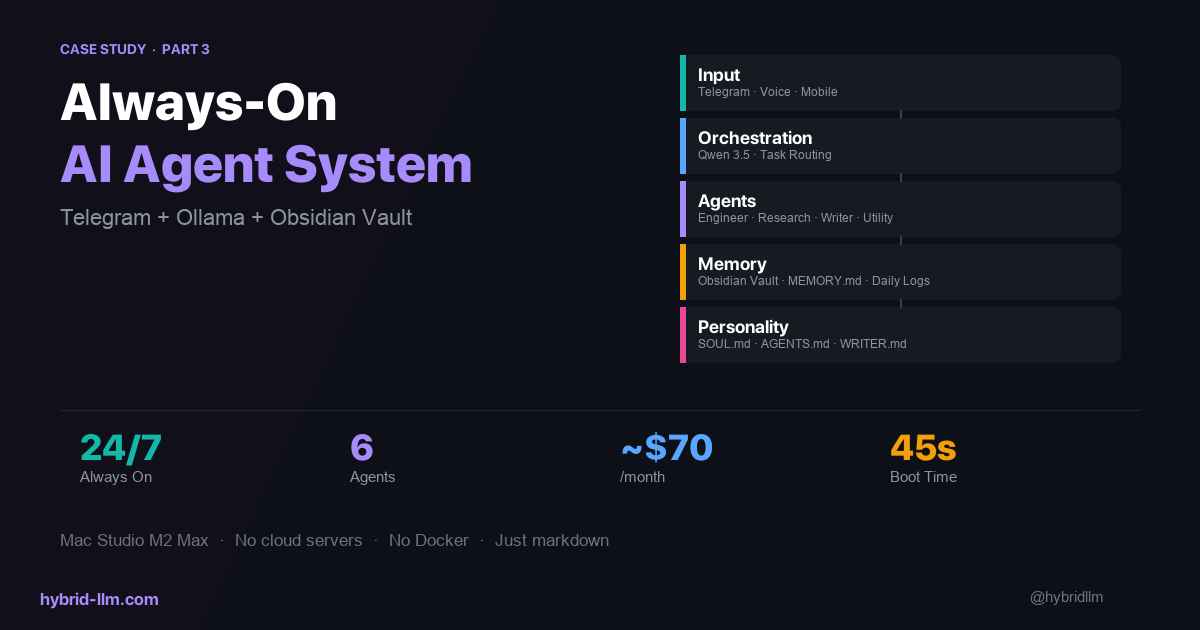

So What Does the Experience Actually Look Like?

Reading through these stories, a pattern emerges. The Hermes experience isn’t dramatically different from OpenClaw on day one. You install it, connect a model, start chatting. The real difference shows up over time.

Week 1: You’re running basic tasks. Summarize this, draft that, look up this information. It works, similar to any agent.

Week 2-3: You start noticing that tasks you’ve done before finish faster. The agent doesn’t ask for clarification it needed last time. It already has a skill for the pattern.

Week 4+: The agent starts feeling less like a tool and more like a colleague who’s been briefed. It knows your project structure, your preferences, your common requests. People describe this as the “secretary” phase — when the agent shifts from executing commands to anticipating needs.

Whether that trajectory holds up in practice is something I can’t verify from the outside. But it’s a consistent narrative across multiple independent sources.

My Honest Take (From the OpenClaw Side)

I’m not switching. At least not yet. My OpenClaw setup is working — the workflows are tuned, the memory files are organized, the cron jobs are running. Ripping that out to try something new has a real cost.

But here’s what I’m taking away from this research:

The memory architecture is genuinely interesting. My current setup relies on manually maintained Markdown files for persistence. Hermes’s automated three-layer approach could reduce a lot of the maintenance work I do to keep context alive.

The self-improving skill loop is the real differentiator. OpenClaw has thousands of community skills, but you browse and install them like packages. Hermes generates them from your actual usage. That’s a fundamentally different model — and one that gets more valuable the longer you use it.

The setup story is better. I spent days getting OpenClaw to a comfortable state. If Hermes really delivers same-day productivity, that matters — especially for people who haven’t started with either framework yet.

What I’ll probably do: keep OpenClaw as my primary workflow engine, and set up Hermes alongside it for a focused test. If the memory and skill-learning claims hold up in my environment, I’ll write about that too.

If You’re Curious

- Hermes Agent GitHub — MIT licensed, self-hosted

- Official docs — setup guides, configuration reference

- Hermes vs OpenClaw comparison (Zenn) — kun432’s hands-on walkthrough

- CTO migration story (SotaSync) — 3-week comparison review

- Reddit: “I just switched to Hermes Agent” — community discussion thread

If you’re already running OpenClaw and things are working, there’s no reason to panic-switch. But if you’ve been struggling with setup, memory management, or just wondering what else is out there — Hermes is worth a look.

And if you do try it, let me know what you find.